Multitenant KEDA: Multiple Scaling Engines, One Cluster

by Jan Wozniak

April 07, 2026

Introduction

Running a single KEDA operator per cluster works well until you need isolation between teams, customers, or environments that share the same cluster.

Maybe you’re a platform team offering autoscaling as a service to internal teams. Maybe you host workloads for multiple customers and a noisy neighbor’s misconfigured ScaledObject shouldn’t affect anyone else. Or maybe you simply need different KEDA versions or configurations for different parts of your cluster.

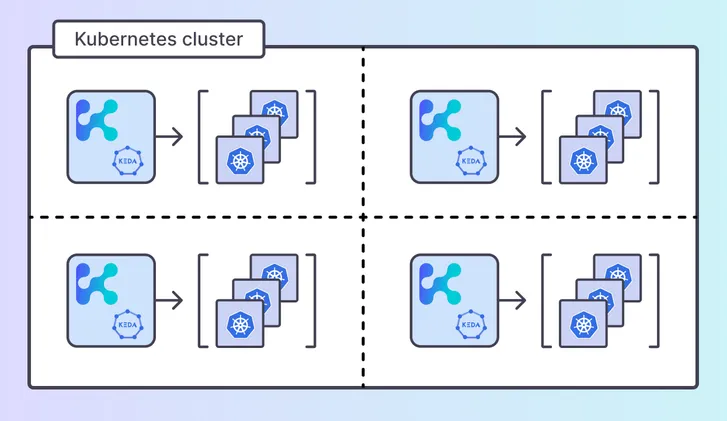

Today we’re introducing Multitenant KEDA - a new capability that lets you run multiple isolated KEDA operators inside a single Kubernetes cluster, each responsible for scaling workloads in its own set of namespaces. A single shared metrics adapter routes HPA requests to the correct operator automatically, with full mTLS isolation between tenants.

This feature is available as a tech preview today and will ship as a supported feature with a feature flag in Kedify releases after the KEDA 2.20 upstream release.

The Problem: Shared KEDA, Shared Risk

In a standard KEDA deployment, one operator handles all ScaledObjects across the cluster. This creates a few challenges for platform teams:

- Blast radius - a broken or excessively frequent trigger in one namespace can starve the operator’s reconcile loop, delaying scaling for everyone else

- Configuration coupling - operator-level settings (polling interval, log level, resource limits) are global, so one team’s requirements may conflict with another’s

- Upgrade risk - upgrading KEDA means upgrading it for every tenant at once, with no way to canary or roll back per tenant

- Noisy neighbor metrics - a single metrics adapter serving all HPAs means one tenant’s slow external metric source can add latency to another tenant’s scaling decisions

Multitenant KEDA solves these problems by giving each tenant its own KEDA operator while keeping the operational overhead of a single cluster.

Architecture

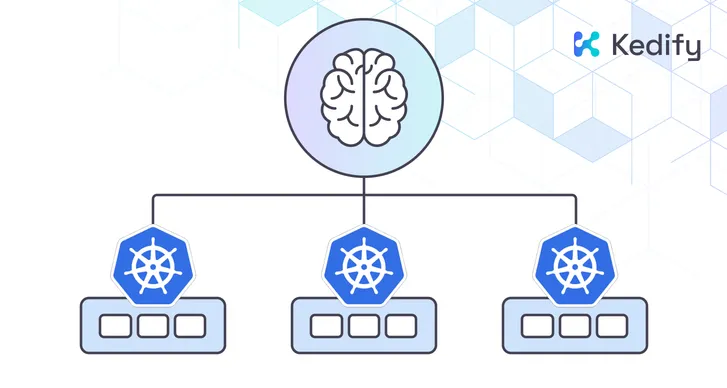

Multitenant KEDA introduces three components:

- Tenant KEDA operators - each runs in the keda namespace but watches only its assigned target namespace(s). They are fully independent: separate deployments, separate TLS certificates, separate reconcile loops.

- Shared metrics adapter - a single keda-operator-metrics-apiserver that the Kubernetes API server calls for external metrics. It routes each HPA’s metric request to the correct tenant operator based on the ScaledObject’s namespace.

- Kedify agent - responsible for setting up the internal multitenancy configuration.

Under the hood, the flow looks like this:

- Each tenant KEDA operator registers itself by creating a ConfigMap labeled kedify.io/tenant-registration: "true"

- The kedify-agent discovers these ConfigMaps, reads TLS certs from each tenant’s secret, and syncs everything into a single configuration secret

- The metrics adapter watches this secret, builds a namespace-to-tenant routing table, and establishes mTLS gRPC connections to each operator

- When an HPA asks for metrics from namespace foo, the adapter routes the request to the operator watching foo

Each tenant operator only sees ScaledObjects in its own namespace. The mTLS certificates are unique per tenant, so even the gRPC transport is isolated.
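
The routing step can be pictured as a lookup from namespace to operator. The snippet below is only an illustrative shell sketch, not the adapter’s actual implementation: the function name route_namespace is hypothetical, the tenant operator names follow the operator.name values used in the Helm walkthrough in this post, and keda-operator as the default operator’s name is an assumption.

```shell
# Hypothetical sketch: map a ScaledObject's namespace to the operator watching it.
# The real adapter derives this table from the shared configuration secret.
route_namespace() {
  case "$1" in
    keda) echo "keda-operator" ;;       # default operator (assumed name)
    foo)  echo "keda-operator-foo" ;;   # tenant "foo"
    bar)  echo "keda-operator-bar" ;;   # tenant "bar"
    *)    echo "no operator watches namespace '$1'" >&2
          return 1 ;;
  esac
}

# An HPA request for a ScaledObject in namespace "foo" goes to tenant foo's operator.
route_namespace foo
```

A namespace that no operator watches has no route, which is why the sketch returns an error instead of falling back to the default operator.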

Scaling KEDA with Operator Sharding

A single KEDA operator works well for small to mid-size clusters. But as the number of ScaledObjects grows into the hundreds or thousands, the operator’s single reconcile loop becomes the bottleneck. Each ScaledObject means another set of metric queries, HPA updates, and status writes, all serialized through one controller. Beyond roughly 1000 ScaledObjects, you may start to see increased reconcile latency, delayed scaling decisions, and the effects of rate limiting.

Multitenant KEDA addresses this directly: instead of one operator handling every ScaledObject in the cluster, you shard the work across multiple operators, each watching a subset of namespaces. If you have 3000 ScaledObjects spread evenly across 30 namespaces, you might run three operators each watching 10 namespaces, cutting the per-operator load to a third. Or you might run 30 operators, each handling a single dedicated namespace. You can deploy multiple keda-operators in a single namespace, one keda-operator per namespace, or any combination of the two topologies.
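
To make the sharding arithmetic concrete, here is a small shell sketch that computes how many ScaledObjects each operator would handle. It assumes the ScaledObjects are spread evenly across operators, and the helper name per_operator_load is made up for this example.

```shell
# Ceiling division: ScaledObjects per operator under an even split.
per_operator_load() {
  total=$1
  operators=$2
  echo $(( (total + operators - 1) / operators ))
}

per_operator_load 3000 1    # one operator for the whole cluster  -> 3000
per_operator_load 3000 3    # three operators, 10 namespaces each -> 1000
per_operator_load 3000 30   # one operator per namespace          -> 100
```

Real clusters are rarely this even, so the busiest namespaces are the ones to consider giving a dedicated operator.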

This is also where tenant isolation becomes a practical benefit rather than just an organizational one. A tenant running a trigger that makes slow HTTP calls to an external API won’t delay scaling for tenants served by a different operator. Each operator maintains its own reconcile queue, metric connections, and goroutine pool.

To set up the default operator with multitenancy enabled:

    # Install the default KEDA operator in multitenant mode
    helm upgrade --install keda oci://ghcr.io/kedify/charts/keda --version v2.19.0-0-mt \
      --namespace keda --create-namespace \
      --set watchNamespace=keda \
      --set kedify.multitenant.mode=default

This installs KEDA as usual, but with the multitenant routing layer enabled in the metrics adapter. On its own, it behaves identically to a standard single-tenant KEDA deployment. The difference is that it can now coordinate with additional tenant operators.

mTLS Certificate Management

Each tenant KEDA operator generates its own TLS certificate pair (managed by KEDA’s built-in cert rotation). The kedify-agent reads these certificates and distributes them to the metrics adapter, which uses them to authenticate gRPC connections to each operator.

Certificate rotation is handled automatically:

- The agent periodically checks for certificate changes (hash-based comparison)

- Updated certificates are synced to the shared configuration secret

- The metrics adapter detects the secret change via filesystem watch and reloads TLS credentials without a restart

This means certificate rotation is seamless - no downtime, no manual intervention, no coordinated rollouts.
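
The hash-based comparison in the first step can be illustrated with a few lines of shell. This is a sketch of the general technique only, not the agent’s code: the function name cert_changed and the state-file approach are assumptions, and it relies on sha256sum from GNU coreutils being available.

```shell
# Hypothetical sketch of hash-based change detection.
# Returns 0 if the file's hash differs from the last recorded one, 1 otherwise.
cert_changed() {
  cert_file=$1
  state_file=$2
  new_hash=$(sha256sum "$cert_file" | cut -d' ' -f1)
  old_hash=$(cat "$state_file" 2>/dev/null || true)   # empty on first run
  if [ "$new_hash" = "$old_hash" ]; then
    return 1                                   # unchanged: nothing to sync
  fi
  printf '%s\n' "$new_hash" > "$state_file"    # remember the hash we just saw
  return 0                                     # changed: sync the new certificate
}

# Example use (paths are placeholders):
#   if cert_changed /path/to/tls.crt /tmp/tls.crt.sha256; then
#     echo "certificate changed, syncing"
#   fi
```

Comparing hashes rather than timestamps means a sync only happens when the certificate bytes actually differ.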

Getting Started (Tech Preview)

Since this is a tech preview, setup involves deploying the tenant operators via Helm. Here’s a walkthrough for a cluster with two tenants (foo and bar) plus the default tenant.

1. Install the kedify-agent with multitenancy enabled:

    helm upgrade --install kedify-agent oci://ghcr.io/kedify/charts/kedify-agent --version v0.5.1-mt \
      --namespace keda --create-namespace \
      --set agent.features.multitenantKEDAEnabled=true \
      --set agent.orgId="****" \
      --set agent.apiKey="****"

The agent watches for tenant registration ConfigMaps and syncs TLS certificates to the metrics adapter.

2. Install the default KEDA operator in multitenant mode:

    helm upgrade --install keda oci://ghcr.io/kedify/charts/keda --version v2.19.0-0-mt \
      --namespace keda --create-namespace \
      --set kedify.multitenant.mode=default \
      --set watchNamespace=keda

3. Deploy additional KEDA operators for each tenant:

    # Install KEDA operator for tenant "foo", watching namespace "foo"
    helm upgrade --install foo oci://ghcr.io/kedify/charts/keda --version v2.19.0-0-mt \
      --namespace keda \
      --set kedify.multitenant.mode=tenant \
      --set watchNamespace=foo \
      --set operator.name=keda-operator-foo \
      --set serviceAccount.operator.name=keda-operator-foo \
      --set certificates.secretName=kedaorg-certs-foo \
      --set crds.install=false \
      --set metricsServer.enabled=false \
      --set webhooks.enabled=false

Each tenant operator runs as a separate deployment in the keda namespace but only reconciles ScaledObjects in its target namespace. Under the hood, each keda chart in multitenant mode creates a registration ConfigMap that the agent discovers.

The tenant install disables three components that are already handled by the default installation:

- CRDs - the CustomResourceDefinitions for ScaledObject and the other KEDA kinds are cluster-scoped and already installed by the default release. Installing them again would create ownership conflicts.

- Metrics server - there’s a single shared metrics adapter that routes HPA requests to the correct tenant, so tenants don’t need their own.

- Webhooks - the admission webhook (ValidatingWebhookConfiguration) is cluster-scoped and already validates ScaledObjects for all namespaces.

Repeat for each additional tenant with a unique release name, operator.name, service account, and certificate secret name.
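
Since only the tenant-specific values change between installs, the repetition can be scripted. The sketch below prints the install command for each tenant rather than running it, so you can review the output first. It assumes the tenant name doubles as the watched namespace and as the suffix for the operator, service account, and certificate secret names, as in the foo example; the helper name tenant_install_cmd is hypothetical.

```shell
# Print (not run) the Helm command that installs one tenant operator.
# Assumes tenant name == watched namespace, as in the "foo" example.
tenant_install_cmd() {
  tenant=$1
  echo "helm upgrade --install ${tenant} oci://ghcr.io/kedify/charts/keda" \
    "--version v2.19.0-0-mt" \
    "--namespace keda" \
    "--set kedify.multitenant.mode=tenant" \
    "--set watchNamespace=${tenant}" \
    "--set operator.name=keda-operator-${tenant}" \
    "--set serviceAccount.operator.name=keda-operator-${tenant}" \
    "--set certificates.secretName=kedaorg-certs-${tenant}" \
    "--set crds.install=false" \
    "--set metricsServer.enabled=false" \
    "--set webhooks.enabled=false"
}

# One command per tenant in the walkthrough.
for tenant in foo bar; do
  tenant_install_cmd "$tenant"
done
```

Once the printed commands look right, piping the output into sh would execute them.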

4. Create ScaledObjects in tenant namespaces as usual; they’ll be picked up by the correct operator automatically.
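
As an illustration, the snippet below writes a minimal ScaledObject manifest for tenant foo to a file. Everything specific in it is a placeholder: the Deployment name worker, the ScaledObject name worker-scaler, the replica bounds, and the cron schedule are invented for this example, and the cron trigger was chosen only because it needs no external metric source.

```shell
# Write an example ScaledObject for namespace "foo" (all names are placeholders).
MANIFEST="${MANIFEST:-scaledobject-foo.yaml}"

cat > "$MANIFEST" <<'EOF'
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: worker-scaler
  namespace: foo              # reconciled by the operator watching "foo"
spec:
  scaleTargetRef:
    name: worker              # hypothetical Deployment in namespace "foo"
  minReplicaCount: 1
  maxReplicaCount: 10
  triggers:
    - type: cron
      metadata:
        timezone: Etc/UTC
        start: "0 8 * * *"    # scale up at 08:00
        end: "0 18 * * *"     # scale back at 18:00
        desiredReplicas: "5"
EOF

# Apply it once the tenant operator for "foo" is running:
#   kubectl apply -f "$MANIFEST"
```

Nothing in the manifest names a tenant or operator; the namespace alone determines which operator reconciles it.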

What’s Next

Multitenant KEDA is available as a tech preview today. After the KEDA 2.20 upstream release, it will ship as a supported feature in Kedify releases behind a feature flag.

If you’re running a platform where multiple teams or customers share a Kubernetes cluster and need isolated autoscaling, we’d love to hear about your use case.

Try It Today

- Please book a demo to walk through a multitenant setup tailored to your environment.

- Check the multitenant KEDA documentation for detailed configuration and architecture reference.

Built by the core maintainers of KEDA. Designed for teams that scale with confidence.